Redefining the Future of Banking: Technology

Against a backdrop of high operating costs and squeezed margins, banks have traditionally viewed technology as a cost center. Today, however, there is growing recognition of its strategic role in maintaining or bolstering operational resilience, reducing long-term operational costs, and enhancing revenue.

To fully leverage the potential of innovations such as generative and agentic AI, financial institutions must first put in place the underlying technology foundations and robust data environments that these capabilities require.

Tighter regulatory oversight of operational resilience is also resetting technology priorities. Many institutions are focusing more on business outcomes, ensuring that technology investments add real value and can evolve with changing regulatory and customer needs. In many cases, this is prompting a rethink of not just what to modernize, but how modernization itself should be tackled.

Ask, don’t navigate: generative AI transforms productivity

As in many other sectors, natural language interfaces are revolutionizing productivity in banking. Generative AI is changing how business users interact with systems, replacing complex workflows and multi-screen navigation with conversational interfaces that turn data into real-time, actionable insights.

Instead of navigating endless menus or writing SQL queries, business users can ask for reports or actions in natural language and get immediate results. For example, asking the interface to “Identify customers aged 34–50 who opened a personal account and a mortgage before 2021” instantly surfaces the relevant customer segment for proactive engagement, supporting financial wellbeing, strengthening customer relationships and potentially creating revenue opportunities. Crucially, the underlying logic or SQL is always visible, so outputs can be explained and audited.
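To make the "visible SQL" point concrete, the example question above could translate into SQL along the following lines. This is a minimal sketch using Python's built-in `sqlite3`; the `customers` and `accounts` tables, their columns, and the sample rows are hypothetical, and it assumes "before 2021" applies to both the personal account and the mortgage.

```python
import sqlite3

# Hypothetical schema: real core banking data models differ.
SCHEMA = """
CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, name TEXT, age INTEGER);
CREATE TABLE accounts (
    account_id INTEGER PRIMARY KEY,
    customer_id INTEGER REFERENCES customers(customer_id),
    product TEXT,        -- e.g. 'personal' or 'mortgage'
    opened_on TEXT       -- ISO-8601 date, so string comparison orders correctly
);
"""

# The kind of SQL a natural language interface might generate and show for audit.
QUERY = """
SELECT c.customer_id, c.name, c.age
FROM customers AS c
WHERE c.age BETWEEN 34 AND 50
  AND EXISTS (SELECT 1 FROM accounts a
              WHERE a.customer_id = c.customer_id
                AND a.product = 'personal' AND a.opened_on < '2021-01-01')
  AND EXISTS (SELECT 1 FROM accounts a
              WHERE a.customer_id = c.customer_id
                AND a.product = 'mortgage' AND a.opened_on < '2021-01-01')
ORDER BY c.customer_id;
"""

def find_segment(conn: sqlite3.Connection) -> list:
    return conn.execute(QUERY).fetchall()

conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
conn.executemany("INSERT INTO customers VALUES (?, ?, ?)",
                 [(1, "Ana", 41), (2, "Ben", 29), (3, "Chloe", 47)])
conn.executemany("INSERT INTO accounts VALUES (?, ?, ?, ?)", [
    (10, 1, "personal", "2018-03-02"), (11, 1, "mortgage", "2020-07-15"),
    (12, 2, "personal", "2017-01-01"), (13, 2, "mortgage", "2019-01-01"),
    (14, 3, "personal", "2019-09-09"), (15, 3, "mortgage", "2022-02-02"),
])
print(find_segment(conn))  # [(1, 'Ana', 41)]
```

Ben falls outside the age band and Chloe's mortgage was opened after 2020, so only Ana qualifies. Because the query is plain text, it can be logged next to the answer for audit.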

In a legacy technology environment, answering this type of query is often impractical, taking weeks or even months of manual effort. By spending less time searching for information, financial institutions can act on insights, innovate faster and respond to customer needs with greater impact.

Beyond analysis, this natural language approach will increasingly shape how digital experiences are created and adapted.

As global guidance and legislation continue to evolve, some financial institutions are gravitating toward the popular open-source Model Context Protocol (MCP), which provides a mechanism for AI to draw on context and data from core systems and external services without duplicating data or embedding logic into models. With this decoupled approach, the AI retrieves only the information a given task requires, at the moment it is needed, which strengthens governance and regulatory alignment.
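MCP messages are JSON-RPC 2.0, and a client asks a server to run a tool with a `tools/call` request carrying the tool `name` and its `arguments`. The sketch below is a hand-rolled illustration of that message shape, not the official MCP SDK; the tool `get_customer_accounts` and its data are hypothetical. It shows the on-demand pattern described above: nothing is copied into the model, and data is fetched only when a specific call asks for it.

```python
import json

# Hypothetical core-banking data; a real MCP server would query core systems at call time.
CORE_ACCOUNTS = {
    "C-1001": [{"account_id": "A-1", "product": "mortgage", "status": "active"}],
}

def get_customer_accounts(customer_id: str) -> list:
    """Hypothetical tool: returns one customer's accounts, only when asked."""
    return CORE_ACCOUNTS.get(customer_id, [])

TOOLS = {"get_customer_accounts": get_customer_accounts}

def handle_request(raw: str) -> str:
    """Answer one JSON-RPC 2.0 'tools/call' request in the message shape MCP uses."""
    req = json.loads(raw)
    rid = req.get("id")
    if req.get("method") != "tools/call":
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": rid, "error": error})
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        error = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        return json.dumps({"jsonrpc": "2.0", "id": rid, "error": error})
    data = tool(**params.get("arguments", {}))
    result = {"content": [{"type": "text", "text": json.dumps(data)}], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": rid, "result": result})
```

Only tools registered in `TOOLS` are reachable, which gives the bank one place to govern what an AI assistant can see.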

Agentic AI drives operational autonomy

While generative AI creates content based on learned patterns, agentic AI combines these capabilities with goal-driven actions to perform tasks. This is fundamentally transforming banking workflows: AI agents can autonomously install, run, operate and upgrade systems, subject to the necessary human oversight.

As deployments accelerate, financial institutions are expected to see clearer, more measurable ROI from large‑scale agentic AI implementations.

In payments, AI agents can detect and repair broken transactions in real time, identifying causes such as formatting errors. Because fewer of these issues require manual intervention, more transactions can be processed with fewer resources.
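The text does not say how agents detect such errors. As a minimal sketch, here is the kind of deterministic "detect and repair" step an agent might call. It uses the public ISO 13616 IBAN mod-97 checksum; the `Payment` fields and the two repair rules (IBAN spacing and case, decimal comma in amounts) are hypothetical.

```python
import re
from dataclasses import dataclass, field

def iban_is_valid(iban: str) -> bool:
    """ISO 13616 mod-97 check: move the first four characters to the end,
    map letters to numbers (A=10 ... Z=35), and require a remainder of 1."""
    if not re.fullmatch(r"[A-Z]{2}[0-9]{2}[A-Z0-9]{11,30}", iban):
        return False
    rearranged = iban[4:] + iban[:4]
    digits = "".join(str(int(ch, 36)) for ch in rearranged)
    return int(digits) % 97 == 1

@dataclass
class Payment:
    payment_id: str
    creditor_iban: str
    amount: str
    repairs: list = field(default_factory=list)  # audit trail of automated fixes

def detect_and_repair(payment: Payment) -> tuple:
    """Apply hypothetical formatting repairs; return (payment, ok_to_release)."""
    cleaned = re.sub(r"\s+", "", payment.creditor_iban).upper()
    if cleaned != payment.creditor_iban:
        payment.repairs.append("normalized IBAN spacing/case")
        payment.creditor_iban = cleaned
    amount = payment.amount.strip()
    if re.fullmatch(r"\d+,\d{2}", amount):  # decimal comma, e.g. "125,50"
        amount = amount.replace(",", ".")
        payment.repairs.append("converted decimal comma")
    payment.amount = amount
    ok = iban_is_valid(payment.creditor_iban) and re.fullmatch(r"\d+\.\d{2}", amount) is not None
    # A payment that is still invalid is not guessed at: the caller routes it to human repair.
    return payment, ok
```

A payment such as `Payment("P1", "gb82 west 1234 5698 7654 32", "125,50")` is repaired and released. One whose checksum still fails after cleanup is held back for a person. Each automated change is kept in `repairs` so it can be reviewed later.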

In watchlist screening, AI agents can act on and resolve alerts as they arise, reducing false positives and easing workloads. Low-risk alerts are automatically assessed and cleared, allowing teams to focus on higher-risk investigations.
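The triage logic behind "low-risk alerts are automatically cleared" can be sketched as a simple three-way routing rule. This is an illustration only: it uses Python's `difflib` as a stand-in for a production name-matching model, and the thresholds and the date-of-birth signal are hypothetical.

```python
from difflib import SequenceMatcher

# Hypothetical thresholds; real values come from a bank's risk appetite and model validation.
AUTO_CLEAR_BELOW = 0.60
ESCALATE_AT_OR_ABOVE = 0.90

def name_similarity(a: str, b: str) -> float:
    """Simple fuzzy name score in [0, 1]; production screening uses richer matching."""
    return SequenceMatcher(None, a.casefold(), b.casefold()).ratio()

def triage(customer_name: str, listed_name: str, dob_matches: bool) -> tuple:
    """Route a screening alert: auto-clear, analyst review, or escalation."""
    score = name_similarity(customer_name, listed_name)
    if score < AUTO_CLEAR_BELOW and not dob_matches:
        return "auto_clear", score      # low risk: eligible for automatic clearing
    if score >= ESCALATE_AT_OR_ABOVE and dob_matches:
        return "escalate", score        # strong hit: sent straight to investigators
    return "analyst_review", score      # everything in between stays with people
```

The design keeps a wide middle band for human review. Automation removes only the clearly low-risk alerts and fast-tracks the clearly high-risk ones.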

According to industry research, banks are most open to using agentic AI for reducing labor costs in operations (86%), followed by support (75%), and maintenance (71%). On average, labor accounts for almost half (49%) of core banking total cost of ownership (TCO), making it the largest cost category, and, currently, the greatest opportunity for agentic AI to reduce TCO.[1]
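A back-of-the-envelope calculation shows why labor is the largest lever. Suppose, purely for illustration, that agentic AI cut labor costs by 20% and every other cost category stayed flat. With labor at 49% of TCO, the overall reduction is the labor share multiplied by the labor saving:

```latex
\frac{\Delta \mathrm{TCO}}{\mathrm{TCO}} = s_{\text{labor}} \times r_{\text{labor}} = 0.49 \times 0.20 = 0.098 \approx 9.8\%
```

The same 20% saving applied to a category that makes up 10% of TCO would cut only 2%.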

These efficiencies free human staff to focus on tasks such as handling complex customer or operational cases, investing in R&D, evaluating growth strategies and delivering empathy and judgement that automation cannot entirely replace. When combined with appropriate human oversight, agentic AI minimizes downtime, strengthens operational resilience, and supports scalability.

Progressive modernization: A lower-risk path to core banking transformation

Core banking technology modernization is a high-stakes transformation. Banks are increasingly avoiding “big bang” implementations where entire core systems are replaced simultaneously.

This style of modernization is expensive, risky, and often fails. Instead, many banks are adopting progressive modernization, an approach in which individual core components are upgraded gradually. This approach has become more feasible as integration frameworks have matured and modern methods such as event-driven architecture, microservices, and domain-based decomposition have become widely adopted.

Legacy core banking systems typically have a monolithic architecture. Components such as customer data, deposits, lending, and payments are tightly intertwined. As a result, there is a high risk of system-wide disruption when updating one component. A progressive modernization approach allows banks to gradually break apart the monolith by carving components into independent systems connected by application programming interfaces and events.

This approach greatly reduces the risk of system-wide disruption during transformation. Rather than taking a multi-year, all-or-nothing gamble, banks can modernize high-priority components such as lending first, while leaving other core components unchanged until needed.
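One common way to carve components out of a monolith is often called the strangler fig pattern. A routing facade sits in front of the core and sends each business domain either to the legacy system or to its modernized replacement. The sketch below illustrates the idea with hypothetical handlers. Cutting over lending changes nothing for deposits, and a problem can be rolled back one domain at a time.

```python
from typing import Callable, Dict

Handler = Callable[[dict], dict]

def legacy_core(request: dict) -> dict:
    """Stand-in for the monolithic core that still owns every unmigrated domain."""
    return {"served_by": "legacy-core", "domain": request["domain"]}

def lending_service(request: dict) -> dict:
    """Stand-in for a newly carved-out lending component."""
    return {"served_by": "lending-service", "domain": request["domain"]}

class CoreFacade:
    """Routes each business domain to the legacy core or to its modernized replacement."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: Dict[str, Handler] = {}

    def cut_over(self, domain: str, handler: Handler) -> None:
        self._migrated[domain] = handler      # only this domain changes

    def roll_back(self, domain: str) -> None:
        self._migrated.pop(domain, None)      # fall back to the legacy core

    def handle(self, request: dict) -> dict:
        return self._migrated.get(request["domain"], self._legacy)(request)

core = CoreFacade(legacy_core)
core.cut_over("lending", lending_service)
```

In a real bank, the facade would usually be an API gateway or integration layer rather than in-process code. The principle is the same: callers see one interface while the components behind it change.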

As decomposition progresses, the approach delivers business value earlier and more frequently. Investment is also spread over time, accelerating ROI as components go live sooner than in a traditional core replacement.

However, this approach can introduce challenges, including:

- Extended transition: Old and new systems coexist for a longer period, increasing complexity in the interim.

- Leadership fatigue: Multi-year transformations test management patience, and stopping midstream can leave technology in an even more complex state.

- Operational shift: IT teams must run more standalone systems and increase investment in systems integration.

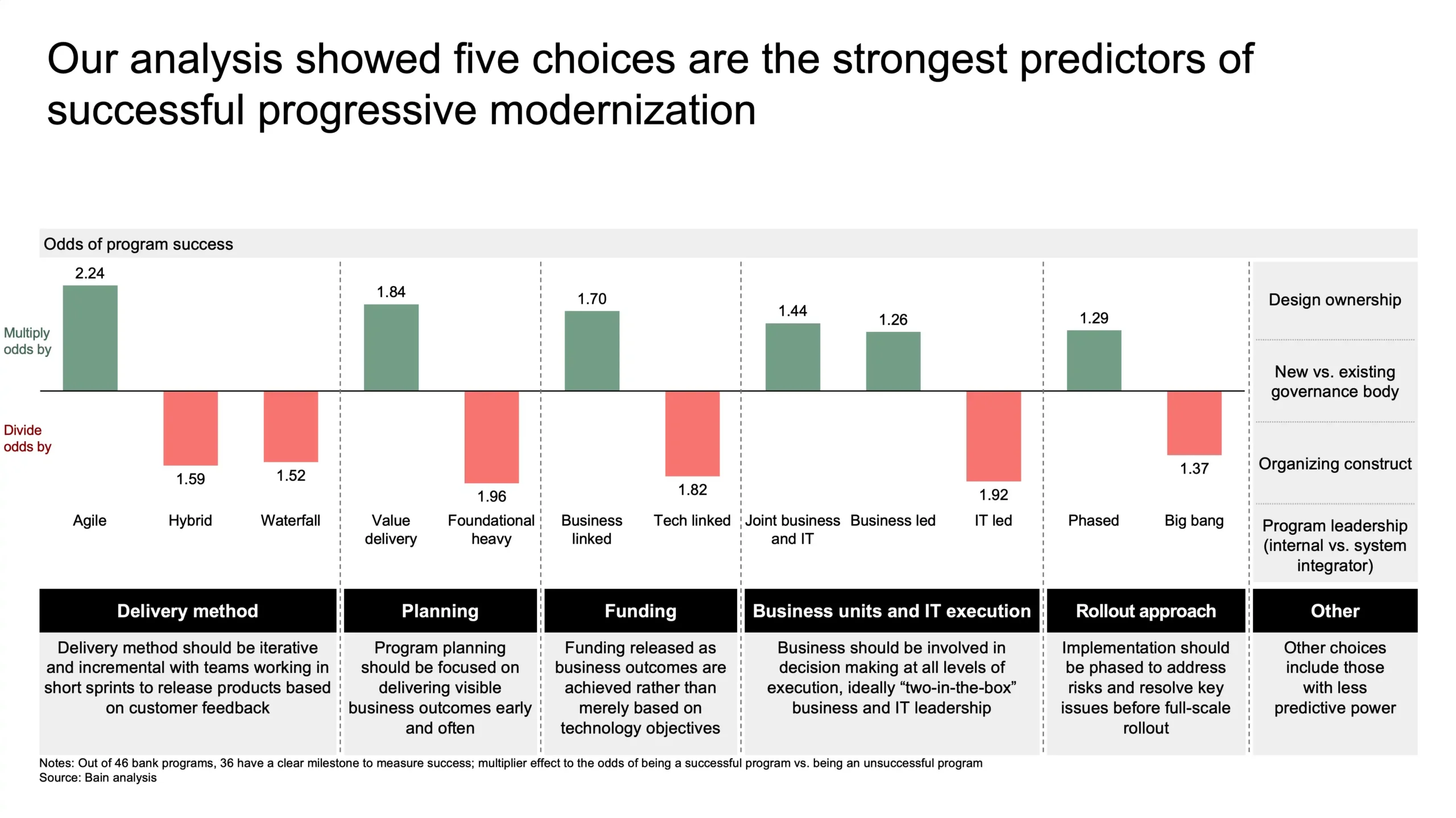

Bain & Company’s analysis of over 45 core programs found that progressive modernization is one of the top five predictors of replacement success, balancing transformation with business continuity. For banks that plan for coexistence and integration, progressive modernization offers a lower-risk path to a modern core.

By: Bain & Company

Cloud and data mesh underpin the “intelligent bank”

Banks across segments are leveraging cloud platforms and SaaS to modernize fragmented back-office systems and to scale for the huge volumes of data required for real-time insights and AI-driven services.

Cloud-native architectures provide the elasticity, resilience, and processing power needed to handle high-frequency data flows, while SaaS solutions remove reliance on legacy infrastructure and enable financial institutions to deploy new capabilities faster.

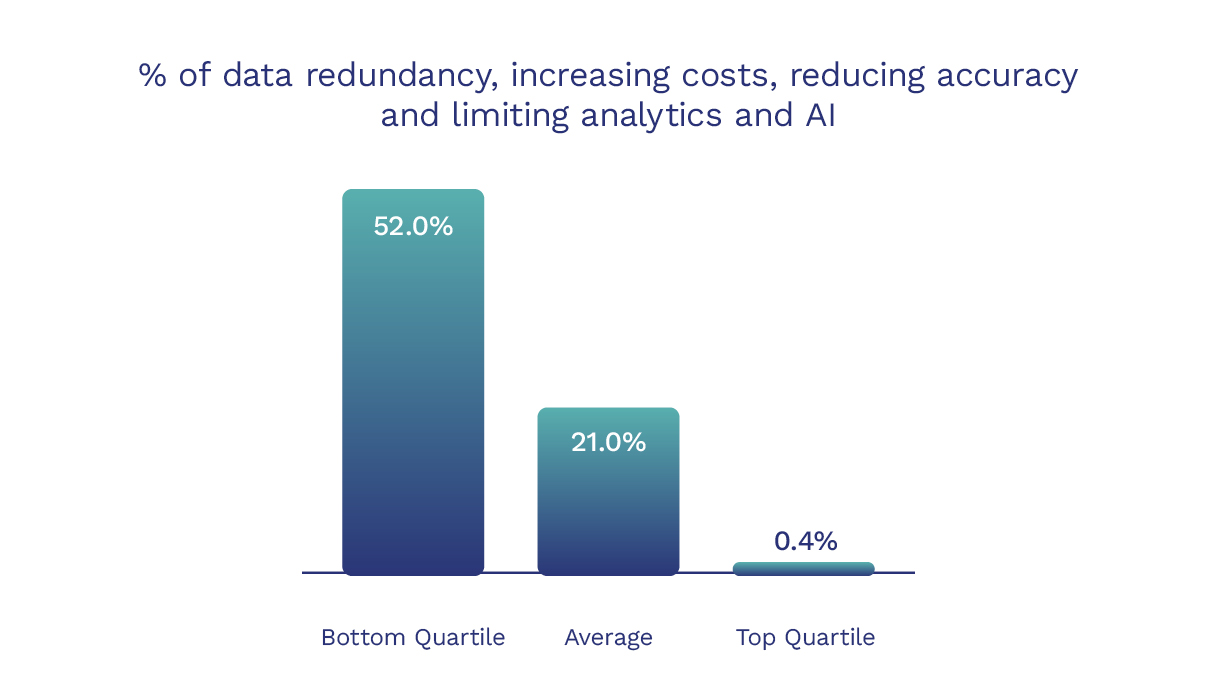

While “data is the new gold” remains true, the reality for many banks is much more complex. Data is often fragmented and difficult to access, making it challenging to use in meaningful ways. Temenos Value Benchmark data indicate that, on average, more than a fifth of bank data is duplicated [2], increasing costs, reducing accuracy, and potentially limiting analytics and AI. For banks in the bottom quartile, more than half of their data is duplicated, a potentially huge drain on resources that could limit their ability to implement intelligent services in the future.
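The benchmark's methodology is not described here. As one plausible way to express a "share of duplicated data", the sketch below counts records that are redundant copies of an entity already held in another system. The matching key (normalized name, date of birth, postcode) and the sample records are hypothetical.

```python
from collections import Counter

def match_key(record: dict) -> tuple:
    """Hypothetical matching key; real entity resolution uses far richer rules."""
    return (
        " ".join(record["name"].split()).casefold(),
        record["dob"],
        record["postcode"].replace(" ", "").upper(),
    )

def duplicate_share(records: list) -> float:
    """Share of records that are redundant copies of an entity already held elsewhere."""
    if not records:
        return 0.0
    counts = Counter(match_key(r) for r in records)
    redundant = sum(n - 1 for n in counts.values())
    return redundant / len(records)

customers = [
    {"system": "core",    "name": "Ana Silva",  "dob": "1980-01-01", "postcode": "SW1A 1AA"},
    {"system": "crm",     "name": "ana  silva", "dob": "1980-01-01", "postcode": "sw1a1aa"},
    {"system": "lending", "name": "ANA SILVA",  "dob": "1980-01-01", "postcode": "SW1A1AA"},
    {"system": "core",    "name": "Ben Okafor", "dob": "1975-06-30", "postcode": "M1 1AE"},
    {"system": "core",    "name": "Chloe Wu",   "dob": "1990-11-12", "postcode": "EH1 1YZ"},
]
print(duplicate_share(customers))  # 0.4
```

Here one customer is stored three times across core, CRM and lending, so two of the five records are redundant.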

In response, financial institutions are increasingly adopting data mesh architectures to organize their data and extract value from it. Data mesh architectures are decentralized but well-governed frameworks that ensure data is organized, compliant, and accessible across all business lines, rather than housed in one function.

Data mesh architectures and cloud platforms support tasks such as sharing real-time transaction data for regulatory reporting, helping to establish the foundations banks need to thrive in increasingly automated, AI-driven operations.
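The "decentralized but well-governed" idea can be sketched as a data product: one business domain owns and publishes the data, but every record must satisfy a shared contract and access policy before others can use it. The class, the `payments.transactions` product and the consumer names below are hypothetical illustrations, not a specific data mesh platform.

```python
from dataclasses import dataclass, field

@dataclass
class DataProduct:
    """Hypothetical data-mesh data product: owned by one business domain,
    published under a contract and access rules shared across the bank."""
    name: str
    owner_domain: str
    schema: dict              # field name -> required Python type (the published contract)
    allowed_consumers: set    # consumers approved under the shared access policy
    _records: list = field(default_factory=list, init=False, repr=False)

    def publish(self, record: dict) -> None:
        # Federated governance: the owning domain publishes, but every record
        # must satisfy the contract before anyone else can see it.
        for column, expected in self.schema.items():
            if not isinstance(record.get(column), expected):
                raise ValueError(f"{self.name}: {column!r} missing or not {expected.__name__}")
        self._records.append(dict(record))

    def read(self, consumer: str) -> list:
        if consumer not in self.allowed_consumers:
            raise PermissionError(f"{consumer} is not approved to read {self.name}")
        return [dict(r) for r in self._records]

transactions = DataProduct(
    name="payments.transactions",
    owner_domain="payments",
    schema={"txn_id": str, "amount_minor": int, "currency": str},
    allowed_consumers={"regulatory-reporting"},
)
transactions.publish({"txn_id": "T-1", "amount_minor": 12550, "currency": "EUR"})
```

Regulatory reporting reads transaction data directly from the payments domain's product, with no separate copy to reconcile. A malformed record is rejected at publication time.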

Banks’ cybersecurity reset: From perimeter to zero trust

The cybersecurity landscape is shifting rapidly, driven by the adoption of AI and advances in quantum computing. As banks move to modern digital banking platforms, they must also shift from legacy perimeter controls to a comprehensive zero-trust approach.

Zero trust moves security from the perimeter into access decisions and continuous verification. In practice, it spans device integrity, tighter privileged workforce access, and modernized cryptography to stay ahead of AI-powered and quantum-era risks while meeting evidence-based regulatory expectations.

Mobile device access to banking services is a prime example of zero trust in practice. Banks increasingly use app and device attestation to cryptographically confirm that banking apps are genuine and running on uncompromised devices. When paired with hardware-backed biometrics and passkeys, these controls help secure account data and reduce the risk of fraud compared with legacy credential-based systems.

As banks deploy AI tools internally, they need guardrails that prevent chatbots from being manipulated or sharing sensitive data. Key risks include hallucinations, which produce incorrect outputs, and prompt-injection attacks, in which crafted inputs bypass safeguards and can cause sensitive information to leak.
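One layer of such guardrails is an output filter that masks sensitive identifiers before a chatbot reply leaves the bank. This minimal sketch masks IBAN-like strings and card numbers that pass the Luhn checksum. The patterns are hypothetical simplifications. A filter like this does not stop prompt injection. It only limits what a manipulated model can leak.

```python
import re

# Hypothetical output-side guardrail: one layer among several, not a complete defense.
IBAN_CANDIDATE = re.compile(r"\b[A-Z]{2}\d{2}(?:\s?[A-Z0-9]){11,30}\b")
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")  # 13 to 19 digits

def luhn_ok(digits: str) -> bool:
    """Luhn checksum used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:       # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

def redact(text: str) -> str:
    """Mask IBAN-like strings and Luhn-valid card numbers in a chatbot reply."""
    text = IBAN_CANDIDATE.sub("[REDACTED IBAN]", text)

    def mask_card(m: re.Match) -> str:
        digits = re.sub(r"\D", "", m.group())
        return "[REDACTED CARD]" if luhn_ok(digits) else m.group()

    return CARD_CANDIDATE.sub(mask_card, text)
```

IBANs are masked first, so their digit runs are never mistaken for card numbers. The Luhn check keeps ordinary reference numbers from being masked unnecessarily.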

Within the organization, zero trust prioritizes control of privileged access across all users. Banks should use locked-down devices for sensitive tasks and grant permissions only when necessary and for limited periods. These controls, underpinned by role-based access and timely removal of rights as roles change, ensure that only designated personnel can access critical security platforms.
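The controls above combine three rules: access is tied to a role, granted just in time, and removed when the role changes. A minimal sketch of a broker that enforces all three follows. The class, the one-hour cap, and the role and platform names are hypothetical, and a real deployment would rely on a dedicated privileged access management product.

```python
from datetime import datetime, timedelta, timezone

MAX_GRANT = timedelta(hours=1)  # hypothetical cap on any single elevation

class PrivilegedAccessBroker:
    """Hypothetical just-in-time broker: role-gated, time-limited, revoked on role change."""

    def __init__(self, role_permissions: dict):
        self.role_permissions = role_permissions  # role -> platforms that role may request
        self.user_roles = {}                      # user -> current role
        self.grants = {}                          # (user, platform) -> expiry time

    def assign_role(self, user: str, role: str) -> None:
        self.user_roles[user] = role
        # Timely removal: (re)assigning a role revokes all of the user's existing grants.
        self.grants = {k: v for k, v in self.grants.items() if k[0] != user}

    def request(self, user: str, platform: str, duration: timedelta, now: datetime) -> bool:
        role = self.user_roles.get(user)
        if role is None or platform not in self.role_permissions.get(role, set()):
            return False
        self.grants[(user, platform)] = now + min(duration, MAX_GRANT)
        return True

    def is_allowed(self, user: str, platform: str, now: datetime) -> bool:
        expiry = self.grants.get((user, platform))
        return expiry is not None and now < expiry

t0 = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
broker = PrivilegedAccessBroker({"security-admin": {"key-management"}})
broker.assign_role("alice", "security-admin")
broker.request("alice", "key-management", timedelta(minutes=30), t0)
```

No user holds standing access. Every elevation expires on its own, and a role change clears all existing grants immediately.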

Banks also need to prepare for post-quantum cryptography by adopting cryptographic agility: upgrading encryption for secure connections and access tokens as quantum-safe standards mature, and retiring weak ciphers that allow downgrade attacks. Regulators are also demanding rigorous technical evidence and tested validation of digital operational resilience. These measures strengthen security, support compliance, and mitigate risk in the evolving quantum and AI era.
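Cryptographic agility largely means keeping algorithm choices in policy rather than hard-coding them. The sketch below uses Python's standard `ssl` module to build TLS client settings from a hypothetical central policy. It sets a minimum protocol version, which refuses older protocol versions, and restricts TLS 1.2 to forward-secret AES-GCM suites. It does not configure quantum-safe algorithms. It only shows the pattern that lets such algorithms be switched in later.

```python
import ssl

# Hypothetical central crypto policy: tightening it changes every client built by
# client_context() without touching application code.
CRYPTO_POLICY = {
    "min_tls_version": "TLSv1_2",      # refuse protocol versions below this
    "tls12_ciphers": "ECDHE+AESGCM",   # forward-secret AES-GCM suites only
}

def client_context(policy: dict = CRYPTO_POLICY) -> ssl.SSLContext:
    """Build a TLS client context from the current policy."""
    ctx = ssl.create_default_context()  # certificate and hostname verification enabled
    ctx.minimum_version = ssl.TLSVersion[policy["min_tls_version"]]
    ctx.set_ciphers(policy["tls12_ciphers"])  # governs TLS 1.2; TLS 1.3 suites are set separately
    return ctx
```

Raising the floor to TLS 1.3 then becomes a policy change, `{**CRYPTO_POLICY, "min_tls_version": "TLSv1_3"}`, that can be rolled out and tested centrally.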

By: Bain & Company