What Is Explainable Artificial Intelligence (XAI)?

A Brief History of Artificial Intelligence

Artificial Intelligence (AI) has the potential to transform how banks operate and the services they provide to customers. But not all AI is alike. In this blog, we explore what Explainable AI is, trace the history of artificial intelligence, and explain why Explainable AI is superior to “black box” AI.

What is Explainable AI?

Explainable AI, often shortened to XAI, is a branch of artificial intelligence that values clearly defined, understandable processes as highly as the results those processes produce. Builders of XAI systems aim to create machines whose decision-making is transparent, so that people can see how a given output was reached.

History of Artificial Intelligence

The term “artificial intelligence” was coined by John McCarthy in 1955, in his proposal for the Dartmouth Summer Research Project on Artificial Intelligence, a 1956 academic workshop he organized with colleagues. McCarthy spent decades writing about and experimenting with artificial intelligence, and he encouraged people to wrestle with the implications of one question: what if we could build a machine that could think like us? One of his most important quotes on the subject comes from his 1979 article “Ascribing Mental Qualities to Machines”:

Machines as simple as thermostats can be said to have beliefs, and having beliefs seems to be a characteristic of most machines capable of problem-solving performance.

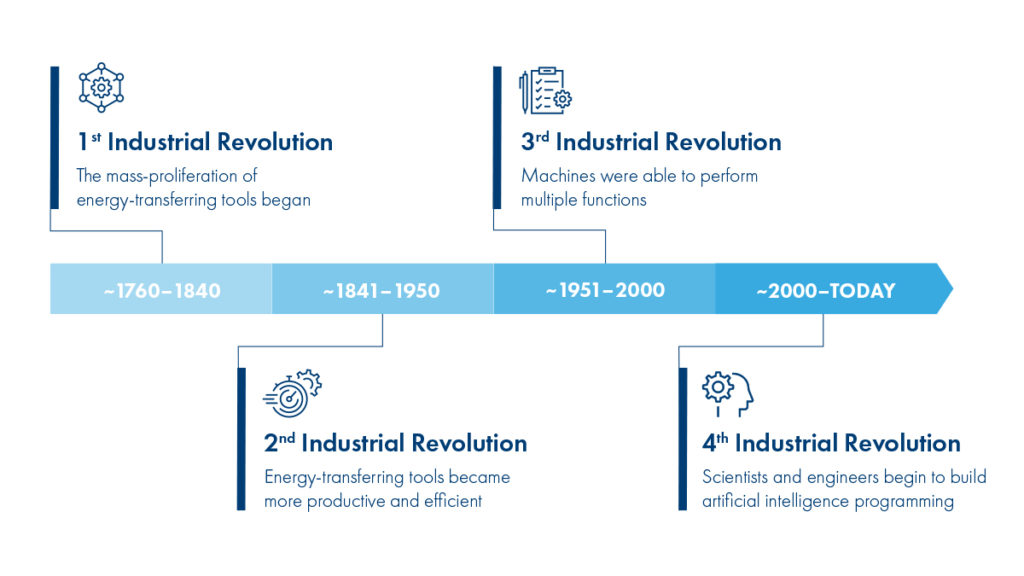

To understand how we arrived at this revolutionary concept, it’s helpful to go further back and trace the history and evolution of machines.

1st Industrial Revolution

During the 1st Industrial Revolution (~1760-1840), machines that harnessed and transferred energy began to spread on a mass scale. For example, water heated into steam could drive a steam engine, and the engine’s rotating motion could move a vehicle. Rather than spend all day digging a ditch by hand, it became more productive to build a machine that could do the same job in a tenth of the time, again and again, for the rest of its useful life. The advances of this period significantly increased individual productivity, but what if these tools could power other, even more powerful and productive, machines?

2nd Industrial Revolution

During the 2nd Industrial Revolution (~1841-1950), humans found ways to do just that. Energy-transferring tools were used to create even more productive and specialized tools, such as the assembly line. Around the turn of the 20th century, major advances were made not only in generating and storing electricity but also in using it as a power source for machines. However, these tools could still perform only one function: a wheel could either turn or not, and a light switch could only be flicked on or off. But what if we could build machines that could perform multiple functions?

3rd Industrial Revolution

Humans answered that question during the 3rd Industrial Revolution (~1951-2000). With the advent of mainframe computers and programming, people could build machines that performed multiple functions or produced different outputs depending on their inputs (the classic “if” statement is a good example). With computers, we created complex programs that could choose among many outputs given an almost endless range of inputs. However, computers were still limited by one thing: their creators’ ability to write the program’s logic. What if we could build a computer that not only created its own logic but kept optimizing that logic over time without human intervention?

4th Industrial Revolution

Today, we are in the 4th Industrial Revolution (~2000-present), in which scientists and engineers are building programs that can build and learn on their own: artificial intelligence. Yes, humans still have to create the initial logic of these programs, but the goal is for computers eventually to learn and improve faster than humans ever could on their own. AI is still in its infancy, yet the opportunities it presents (and, yes, the dangers) seem almost endless. Realizing those opportunities safely depends on humans being able to understand and adjust these machines as they are refined. That’s why Explainable AI is so important.

Why XAI is Superior to Black Box Artificial Intelligence

Traditional black box artificial intelligence programs exhibit the following problems:

- Difficult to verify whether outputs are “correct”

- Difficult to understand where the AI system “went wrong” and then make improvements

- Difficult to uncover biases and prejudices their creators may have built in

The risks of blindly trusting artificial intelligence today are too great. Very few people are willing to stake their organization or business on outputs they can’t trust or verify. At Temenos, we see Explainable AI as a safe and powerful way for banks to transform their customer service and financial services operations. To learn more, watch our recent webinar, “Explainable AI – Not Just Desirable but Imperative,” in which Janet Adams and Hani Hagras discuss why banks should be leveraging XAI to drive digital transformation.